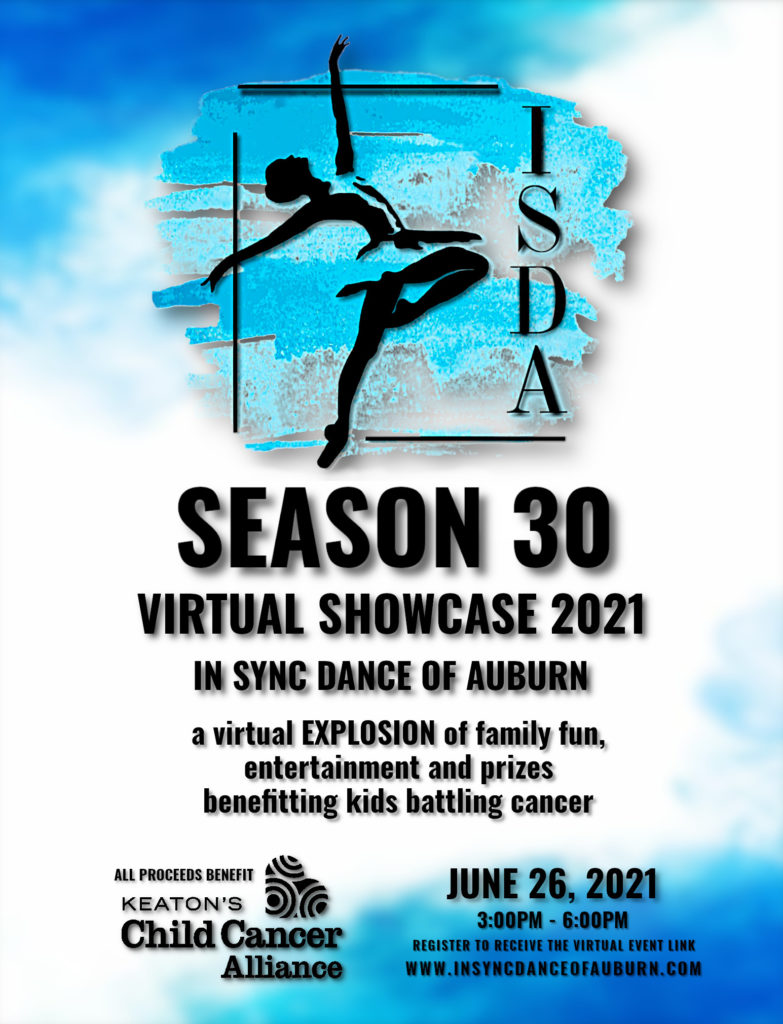

This past weekend I produced the live portion of In Sync Dance of Auburn’s virtual showcase. With theaters closed to audiences for over a year, the studio decided to move the performances outdoors and film each class separately. That way the students’ work could still be shown while keeping groups small and distanced.

Ultimately, an hour and a half’s worth of video was produced in a music-video-like format. To share it with the world, the studio decided to produce a live stream event in partnership with Keaton’s Child Cancer Alliance, the studio’s charity partner. The live stream included people talking in real time, PowerPoint slides before and after the show, various videos, viewer-submitted “watch party” pictures, and finally all of the dance performance videos.

At the end of the day, the live stream performed well with minimal hiccups. Feedback has been positive all around.

Here’s how I set up everything to make the stream happen:

At the core of the stream is a Blackmagic Design ATEM Mini Pro. The ATEM was used to mix all of the various video sources together. All four HDMI inputs were used for different sources:

- Camera

- PowerPoint computer

- Playback computer

- Titling computer

Audio was handled by my Behringer X32 mixer. I chose to do all audio mixing outside the ATEM because I prefer having more control than the ATEM provides. Audio was routed from the main mix to Matrix 1 & 2 for additional tuning, then sent out the Aux 5 & 6 outputs on the back of the mixer and into the Mic 1 input on the ATEM, which I had to set to accept line-level sources. I didn’t like having to feed audio to the ATEM over an unbalanced stereo 1/8″ connector, but in the end the audio was clear and intelligible, so it wasn’t a big deal.

Microphones were simple wireless lapel models, with wireless handheld mics on hand nearby if needed. The receivers sat in the studio room and connected to a Behringer SD8 stagebox, which carried the four microphone channels back to the control room over a single CAT-5 cable. Each microphone channel was delayed by 100 ms to line the audio up with the latency of the video signal.
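The 100 ms figure is the value used here; how it was arrived at isn't described. A common way to reason about it is to count how many frames of latency the video path adds and convert that to milliseconds. A minimal sketch of that arithmetic, assuming 48 kHz audio and purely illustrative frame counts (not measurements from this production):

```python
# Relating a video chain's latency to the audio delay needed to match it.
# The frame counts and frame rates below are illustrative assumptions,
# not measurements from this production.

def frames_to_ms(frames: float, fps: float) -> float:
    """Latency of `frames` video frames at `fps`, in milliseconds."""
    return frames * 1000.0 / fps


def ms_to_frames(delay_ms: float, fps: float) -> float:
    """How many video frames a delay of `delay_ms` milliseconds spans."""
    return delay_ms * fps / 1000.0


def ms_to_samples(delay_ms: float, sample_rate: int = 48_000) -> int:
    """Delay as a whole number of audio samples (48 kHz assumed)."""
    return round(delay_ms * sample_rate / 1000.0)


if __name__ == "__main__":
    # e.g. if converters, switcher, and encoder together added ~3 frames at 30 fps
    print(f"3 frames at 30 fps = {frames_to_ms(3, 30.0):.1f} ms")
    # a 100 ms mic delay expressed in frames at a few common rates
    for fps in (29.97, 30.0, 59.94):
        print(f"100 ms at {fps} fps = {ms_to_frames(100.0, fps):.2f} frames")
    print(f"100 ms at 48 kHz = {ms_to_samples(100.0)} samples")
```

In practice the final value is usually fine-tuned by ear and eye (a clap on camera works well), since each converter and encoder in the chain adds its own latency.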

The camera was a Canon VIXIA HF R800, chosen because it provides a “clean HDMI” output (video only, with no menus or other overlays on the HDMI connection). At the camera, an HDMI-to-SDI converter sent the video signal over a longer cable run. In the control room, a paired converter turned the SDI signal back into HDMI to feed input 1 on the ATEM.

The PowerPoint slides ran on one of the studio’s staff computers, connected over HDMI. Music during the slides came from a phone playing a selection of free-to-use music, fed into the mixer on Aux 1 & 2.

Playback ran on a spare computer I had. I installed VLC and enabled its web interface. I built a playlist with all the video segments in order, which made it easy to pick which video to play by its position in the playlist. The web interface also allowed easy control of play/pause, full screen, and other settings without risking on-screen controls showing up during playback. Audio for playback came through an attached Behringer UCA222 USB interface connected to Aux 3 & 4 on the mixer. I tried using HDMI-embedded audio at first, but the computer kept giving me trouble with it, so a separate interface was needed. I mapped Channels 15 & 16 on the mixer to receive audio from Aux 3 & 4 so I could have all inputs on a single layer of the mixer for quick access.
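VLC's web interface is driven by plain HTTP requests to `/requests/status.xml` with a `command` parameter, so anything that can send a GET request (a browser, a script, or a Companion HTTP button) can control playback. A minimal sketch, assuming the default port 8080 and a password set in VLC's preferences; the host address, password, and item ids are hypothetical. Note that VLC's playlist ids are assigned by VLC and usually don't match the visible position, so it's worth checking `/requests/playlist.json` to see which id belongs to each segment.

```python
import base64
import urllib.parse
import urllib.request

# Hypothetical values for this sketch: adjust to the playback computer.
VLC_HOST = "192.168.1.50"
VLC_PORT = 8080            # VLC's default web interface port
VLC_PASSWORD = "changeme"  # VLC's web interface uses an empty username


def command_url(command: str, **params) -> str:
    """Build a status.xml URL for one VLC web-interface command."""
    query = urllib.parse.urlencode({"command": command, **params})
    return f"http://{VLC_HOST}:{VLC_PORT}/requests/status.xml?{query}"


def auth_header(password: str) -> str:
    """HTTP Basic auth value with an empty username, as VLC expects."""
    token = base64.b64encode(f":{password}".encode()).decode()
    return f"Basic {token}"


def send(command: str, **params) -> bytes:
    """Send one command and return VLC's XML status response."""
    req = urllib.request.Request(command_url(command, **params))
    req.add_header("Authorization", auth_header(VLC_PASSWORD))
    with urllib.request.urlopen(req, timeout=2) as resp:
        return resp.read()


def play_item(item_id: int) -> bytes:
    """Start a specific playlist entry by its VLC playlist id."""
    return send("pl_play", id=item_id)


def toggle_pause() -> bytes:
    return send("pl_pause")


def toggle_fullscreen() -> bytes:
    return send("fullscreen")
```

One button per segment then just calls `play_item()` with that segment's id, which mirrors the per-segment buttons used during the show.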

Titling used H2R Graphics. This program let us overlay a countdown timer before the live portion began, a ticker with contact information, and selected YouTube comments on top of the camera feed. The ATEM’s upstream keyer was used to chroma key the titling input over the camera feed. While this worked well, next time I need to learn how to do titling with the downstream keyer, because it was annoying to manually re-enable the keyer each time I changed video sources on the ATEM (ATEM macros were also very spotty at enabling the keyer and couldn’t be relied upon).
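The idea behind chroma keying the titling input is simple: wherever the graphics frame matches the key colour, the camera picture shows through instead. A toy per-pixel sketch in plain Python, assuming a pure-green key colour and an arbitrary distance threshold; the ATEM's hardware keyer is far more capable, with many more controls, and this is only meant to show the concept.

```python
from math import dist

# A pixel is an (R, G, B) tuple with 0-255 components.
Pixel = tuple[int, int, int]


def chroma_key(graphics: list[Pixel], camera: list[Pixel],
               key: Pixel = (0, 255, 0), threshold: float = 100.0) -> list[Pixel]:
    """Composite graphics over camera, dropping graphics pixels near the key colour."""
    out = []
    for g, c in zip(graphics, camera):
        # Plain Euclidean distance in RGB space; real keyers use better colour models.
        out.append(c if dist(g, key) < threshold else g)
    return out
```

For example, a green background pixel and a slightly off-green pixel both let the camera through, while white text stays on top.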

The ATEM’s HDMI output fed an HDMI splitter. One output gave the control room a view of everything; the other fed an HDMI-to-SDI link back to a monitor under the camera so the talent could see the pictures about to be featured and the YouTube comments we popped up on screen.

The ATEM’s USB-C output fed a dedicated computer running OBS Studio, which did the final encoding to YouTube at a 4500 Kbps (4.5 Mbps) constant bit rate. I tried to use the ATEM’s built-in encoder for the backup connection, but YouTube expects the primary and backup streams to use identical parameters, and I couldn’t get the ATEM configured to match. The initial motivation for using OBS was to enable closed captions for Deaf members of our audience. Captioning was performed using Web Captioner. I tried both the OBS integration and the YouTube (HTTP) integration and found that the HTTP integration gave better results. Even so, YouTube’s captions were missing a lot of content that Web Captioner showed at the source.
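Web Captioner handled the YouTube side itself, but the HTTP integration is simple enough to sketch. As described in YouTube's help documentation (worth re-checking before relying on it), each caption is a plain-text POST to the ingestion URL YouTube shows for the stream: a UTC timestamp line in `YYYY-MM-DDTHH:MM:SS.mmm` form followed by the caption text, with a `seq` query parameter that increases on every post. The ingestion URL below is a placeholder, and this is not Web Captioner's actual code.

```python
import urllib.parse
import urllib.request
from datetime import datetime, timezone

# Placeholder: paste the caption ingestion URL YouTube provides for the stream.
INGESTION_URL = "https://example.invalid/closedcaption?cid=PLACEHOLDER"


def caption_body(text: str, when: datetime) -> str:
    """Timestamp line (UTC, millisecond precision) followed by the caption text."""
    when = when.astimezone(timezone.utc)
    stamp = when.strftime("%Y-%m-%dT%H:%M:%S.") + f"{when.microsecond // 1000:03d}"
    return f"{stamp}\n{text}\n"


def caption_url(base: str, seq: int) -> str:
    """Append seq to the ingestion URL while keeping its existing query string."""
    parts = urllib.parse.urlsplit(base)
    query = urllib.parse.parse_qsl(parts.query) + [("seq", str(seq))]
    return urllib.parse.urlunsplit(parts._replace(query=urllib.parse.urlencode(query)))


def post_caption(text: str, seq: int) -> int:
    """POST one caption; returns the HTTP status code."""
    body = caption_body(text, datetime.now(timezone.utc)).encode("utf-8")
    req = urllib.request.Request(caption_url(INGESTION_URL, seq), data=body, method="POST")
    req.add_header("Content-Type", "text/plain")
    with urllib.request.urlopen(req, timeout=5) as resp:
        return resp.status
```

A captioning loop would call `post_caption()` with each finished phrase and a counter for `seq`.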

Final control over everything came from an additional computer running Bitfocus Companion with an attached Elgato Stream Deck. When I first learned about the Stream Deck a year ago, I thought it was silly. But after seeing some YouTube videos about its usefulness for streaming, I decided to give it a try. This tool changed everything and made the event very smooth. I set up buttons to do things like switch the ATEM to the camera and unmute the mic DCA on the X32. Another button faded to the playback input, muted the mic DCA, and unmuted the playback DCA. Additional buttons selected each video segment for quick access.
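Companion's modules did the actual talking to the ATEM and the X32. As a rough picture of what the audio half of a "go to playback" button sends, here is a minimal sketch that builds raw OSC messages by hand and sends them over UDP to the X32, which listens for OSC on port 10023. The `/dca/N/on` path (1 = on, 0 = muted) follows the X32's OSC command set as commonly documented; the mixer IP and the DCA numbers for mics and playback are hypothetical. The ATEM switch and fade are left out because they use Blackmagic's own protocol.

```python
import socket
import struct

X32_OSC_PORT = 10023  # UDP port the X32 listens on for OSC

# Hypothetical assignments for this example
MIXER_IP = "192.168.1.20"
MIC_DCA = 1
PLAYBACK_DCA = 2


def osc_pad(data: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC strings require."""
    data += b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_int_message(address: str, value: int) -> bytes:
    """Encode an OSC message with one 32-bit big-endian integer argument."""
    return osc_pad(address.encode("ascii")) + osc_pad(b",i") + struct.pack(">i", value)


def set_dca_on(sock: socket.socket, dca: int, on: bool, ip: str = MIXER_IP) -> None:
    """Unmute (on=True) or mute (on=False) one DCA group."""
    sock.sendto(osc_int_message(f"/dca/{dca}/on", 1 if on else 0), (ip, X32_OSC_PORT))


def go_to_playback_audio(sock: socket.socket) -> None:
    """Audio half of a 'go to playback' button: mics down, playback up."""
    set_dca_on(sock, MIC_DCA, on=False)
    set_dca_on(sock, PLAYBACK_DCA, on=True)
```

Usage would be along the lines of `with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock: go_to_playback_audio(sock)`. Grouping the mute/unmute pair into one function is the same idea as stacking several actions on one Stream Deck button.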

Earlier in my preparations for this event, I purchased a Blackmagic Design HyperDeck Studio Mini, thinking it would be perfect for playback. It offers high-quality playback and can be controlled by the ATEM. But I decided not to use it because it was a huge pain to figure out the right export settings in DaVinci Resolve for files that would play back properly. Most exported files I created simply refused to play, despite the deck and the software being made by the same company. And the deck refused to play H.264 files created by anything other than itself. So the HyperDeck was out of the picture.

I then heard about PlayoutBee and thought it could solve my playback needs. Unfortunately, it behaved erratically, and Companion had a hard time staying connected to it. There were also smaller bugs that added frustration, such as the mouse pointer appearing on screen over the video; I had to attach a mouse, move it so the pointer would disappear, then disconnect the mouse. Another odd behavior was the lack of proper scaling. If I played a 4:3 video, it zoomed the video to fill the full width, which pushed much of the picture off the top and bottom of the screen. And if I played a video wider than 16:9, it left a huge black bar at the bottom of the screen instead of centering the video with letterbox bars above and below, as we normally expect. Some H.264 files would play and others would not, despite all being encoded by Handbrake. Given the general unreliability, I decided to use VLC on a dedicated computer instead.
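The scaling we normally expect is fit-and-center: scale the source by the smaller of the width and height ratios so the whole picture fits on screen, then center it. That produces side bars (pillarbox) for 4:3 material and top and bottom bars (letterbox) for anything wider than 16:9. A minimal sketch, assuming a 1920×1080 output:

```python
def fit_centered(src_w: int, src_h: int, dst_w: int = 1920, dst_h: int = 1080) -> tuple[int, int, int, int]:
    """Return (x, y, width, height) of the largest centered rectangle that
    keeps the source aspect ratio and fits entirely inside the output."""
    scale = min(dst_w / src_w, dst_h / src_h)  # the limiting dimension wins
    w = round(src_w * scale)
    h = round(src_h * scale)
    return (dst_w - w) // 2, (dst_h - h) // 2, w, h
```

For example, `fit_centered(640, 480)` gives `(240, 0, 1440, 1080)`, a pillarboxed 4:3 picture, and `fit_centered(1920, 804)` gives `(0, 138, 1920, 804)`, a scope-width picture with equal bars above and below.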

Longer term, I’m going to see how I can repurpose the Raspberry Pi 4 I bought for PlayoutBee to automatically run VLC with its web interface, instead of carrying my extra full Windows computer for the task. Hopefully H2R Graphics will eventually offer a Raspberry Pi version too, which would let me simplify another computer.
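One possible starting point for the Raspberry Pi idea is launching VLC without its desktop interface and with the web interface enabled at boot (for example from a systemd service or an autostart entry). A sketch of building that command line in Python; the playlist path and password are hypothetical, and while the switches used (`--extraintf http`, `--http-port`, `--http-password`, `--fullscreen`, `--no-video-title-show`, `--no-playlist-autostart`) are standard VLC options, it's worth confirming them with `vlc -H` on the Pi's VLC build.

```python
import subprocess

# Hypothetical values for this sketch
PLAYLIST = "/home/pi/show/playlist.m3u"
HTTP_PASSWORD = "changeme"


def vlc_command(playlist: str, password: str, port: int = 8080) -> list[str]:
    """Build a cvlc command line with the web interface enabled."""
    return [
        "cvlc",                     # VLC without the Qt interface
        "--extraintf", "http",      # add the web interface for remote control
        "--http-port", str(port),
        "--http-password", password,
        "--fullscreen",
        "--no-video-title-show",    # don't overlay file names on the output
        "--no-playlist-autostart",  # wait for a play command instead of starting at once
        playlist,
    ]


def launch() -> subprocess.Popen:
    """Start VLC in the background with the hypothetical settings above."""
    return subprocess.Popen(vlc_command(PLAYLIST, HTTP_PASSWORD))
```

Holding playback until a command arrives keeps the first video from starting on its own when the Pi boots.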

Thankfully, with lots of practice and prep work ahead of time, I knew when I arrived what I needed to bring, how to set everything up, and how to make it all work together. The prep time paid off with a production that looked good, sounded good, and flowed smoothly for our audience.